Cybersecurity Powered by AI

2Rai is not just a tool; it’s a revolution in how cybersecurity is approached and executed, driven by cutting-edge AI.

Harnessing the Power of LLMs

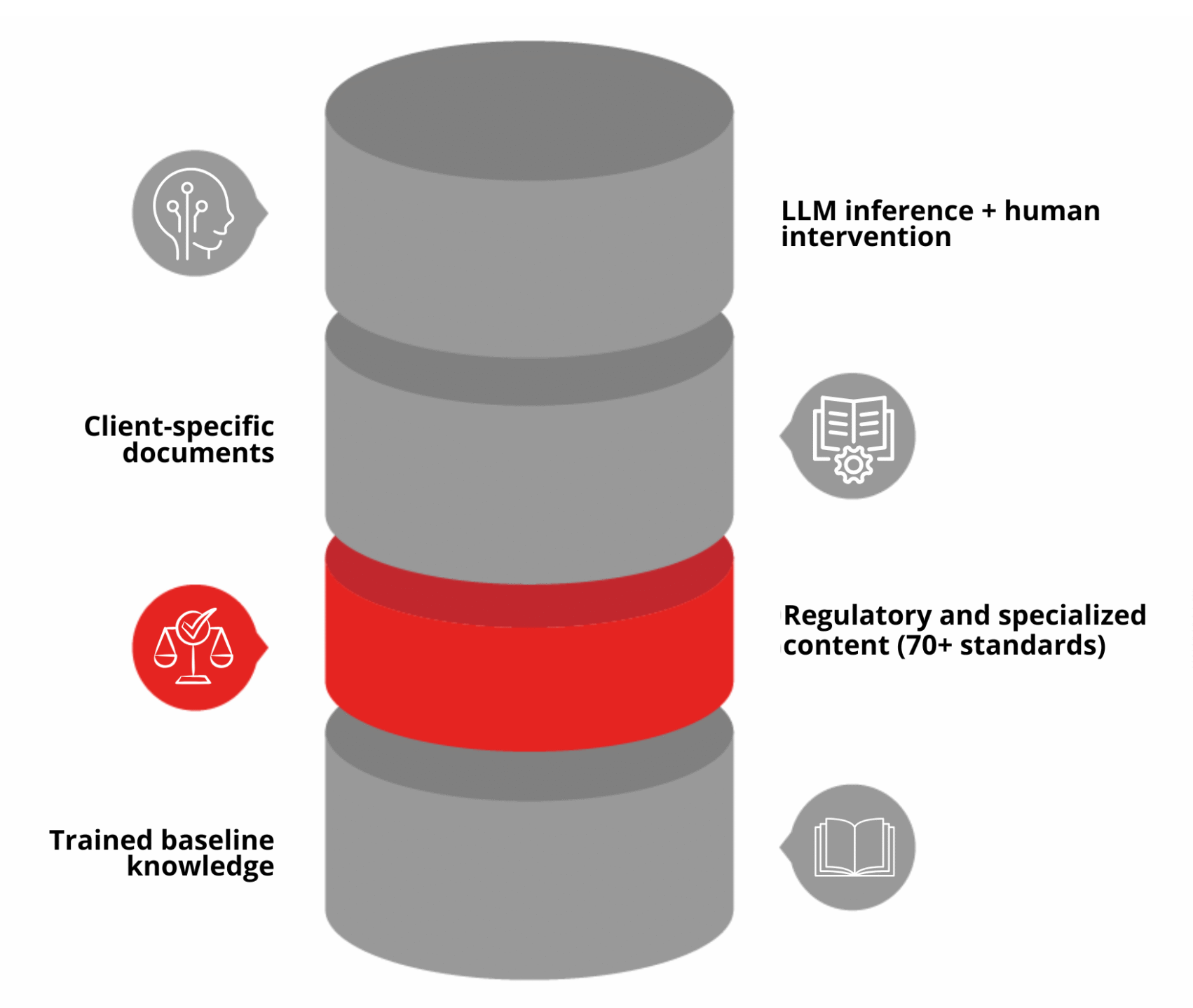

An LLM (Large Language Model) is a type of artificial intelligence that reads and produces human language. Think of it as a very well‑read assistant: it has absorbed large amounts of text so it can understand questions, find relevant context and compose clear, natural replies.

In the context of 2Rai, the LLM is the conversational engine behind the assistant. It combines its broad language understanding with the specific documents you provide to produce contextual answers, summaries and recommendations tailored to your situation.

Trusted data, trusted answers

Inside the Training of 2Rai

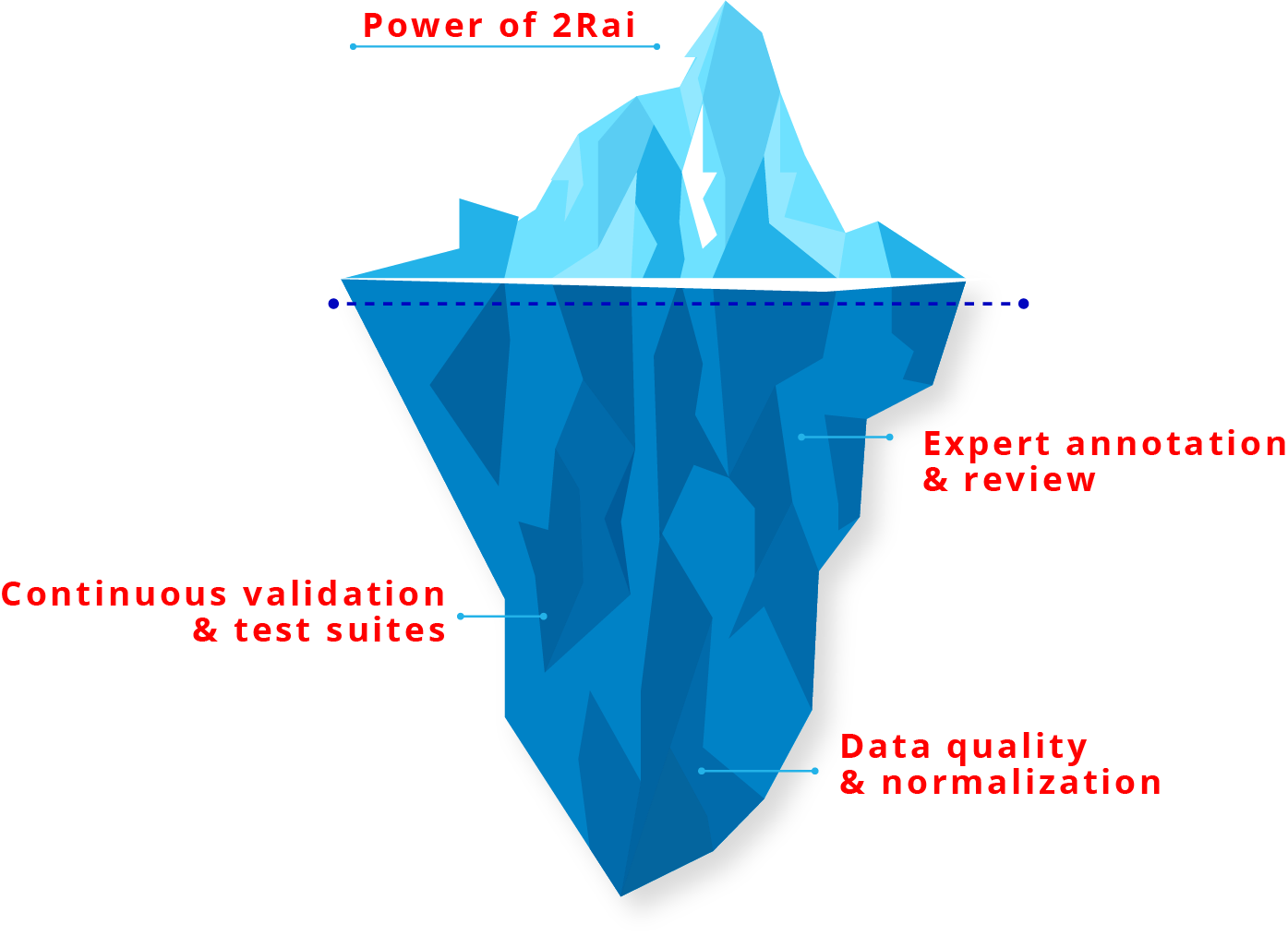

The strength of 2Rai lies in its expert-driven training process. Through meticulous tuning and validation, our model is crafted to deliver reliable insights tailored to your security needs.

Training is guided by a broad set of regulatory frameworks, so outputs are both compliant and actionable. Each interaction aligns with industry best practices, offering both security and assurance.

Quick Wins for Security Teams

Faster decision-making

Turn long reports into concise, actionable summaries for executives and technical teams.

Consistent procedures

Generate standardized playbooks and checklists to reduce variability in incident response.

Efficient audit readiness

Save time on evidence review by extracting key findings, quotes, and tables, while producing traceable summaries that support compliance reviews.

Enhanced accuracy

Improve precision in data analysis and reporting, ensuring dependable insights for security strategies.

Improved cross-team communication

Translate technical findings into business-friendly language for compliance and leadership.

Onboarding and knowledge retention

Use the assistant as a searchable, conversational knowledge base for policies and procedures.

Proof in Numbers

Sources:

- Cost of a data breach 2025 | IBM. (n.d.). https://www.ibm.com/reports/data-breach

- The 2025 AI Index Report | Stanford HAI. (n.d.). https://hai.stanford.edu/ai-index/2025-ai-index-report

- Olivera, J. M. (2025, April 15). Enhancing regulatory compliance in the AI age by grounding documents with generative AI. IBM. https://www.ibm.com/think/insights/enhancing-regulatory-compliance-ai-age